Learning about learning


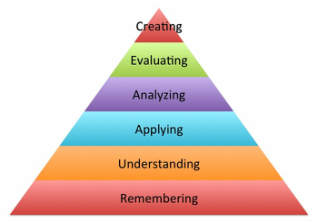

As an elearning manager, I have reviewed a lot of elearning during my career. Much of it dumps content out there, followed by multiple choice questions. If training is learning new skills, then why aren't we testing the skills learners are supposed to acquire in our programs? What is the difference between what we do and what marketers do in content marketing? As Harold Stolovitch would say, "Tellin' ain't trainin' and trainin' ain't performance." After considerable noodling, I've come up with four reasons why we assess learning so poorly.


I love Chris Pappas. At least I love his work to support the world of elearning. His blog post yesterday in the eLearningIndustry blog, "Tips to Use Learners' Imagination in eLearning," hit a nerve for me. I've been slogging through a project management course for PMP certification and am getting to see the torture that we put our learners through when we assemble uninspired elearning.

Don't get me wrong. The course is very professionally produced. There is video footage and synchronized slides expounding on the vocabulary of project management. The lectures don't repeat word-for-word what is on the slide, but rather support the intended message. They are chunked out into 5-8 minute segments. As far as lecture production goes, the course gets an A.

However, after nearly two hours of this format, I have not done a thing to apply the project management vocabulary I've been "taught". I feel like I'm sitting in a lecture hall listening to a professor drone on and on, despite the professional actors they have used to present the information. Have I learned anything? Not really, and that's not just because I've been doing project management for 20 years. I also don't think it's because I'm not taking copious notes on the talker and his slides. It's because the goal of this course appears to be to provide the mandatory 35 hours of prep time to sit for the PMP exam, which, from everything else I've seen, is four hours of answering multiple choice questions about this kind of content. Some of the prep sites I've seen include math-related analysis questions, so I live in hope that we shall leave the halls of the lectures and apply things soon.

what should they be able to do?

This kind of course design flies in the face of good instructional design because there is no application of the learning. There's also no engagement with the learner. The production values are great, and I suspect they cost a fair amount of money, based on the price tag for the prep. But if in the end I can't DO project management, and can only pass a test, what have I achieved? I start every learning project by making my SME answer one question: At the end, what should the learner know and be able to do? If the subject matter expert cannot answer that in a single sentence, they do not know what they want. Whether it's a curriculum, a module or a course, if we don't have the learner DOING at the end, why are they learning it?

Imagine This

After reading Chris' article, I imagined many possibilities for this boring PM course.

The list goes on. I have to wonder. There must be explanations for why the designers never thought about the learner when they built this.

There are 33 more hours left of this course. I'm not terribly hopeful that there will be wonders in store for me.

active learners are better learners

The maker movement has been gaining a foothold in K12, which makes sense. Kids like to experiment and figure things out. If you're new to the Maker Movement, here's a great primer article from ASCD's Educational Leadership called "Tinkering is Serious Play." Here's an excerpt from the article with an interesting set of definitions of what learning looks like, from the framework of the Tinkering Studio at the Exploratorium, a museum in San Francisco.

What learning in Tinkering looks like

Engagement

Social Scaffolding

so what?

I am a huge proponent of active learning. I work hard to create activities that will help students grasp the concepts and apply them so there is transference. Problem-centered learning, group work and scenario-based learning are three ways to accomplish this in online learning, but that is largely about thinking. It applies the same skills. How can we leverage more of the maker movement in our online classes in higher ed and in our corporate training?

I'd love your thoughts.


Jean Marrapodi

Teacher by training, learner by design.
